In our new article, we tried to find new answers to an old question: Are word meanings “the same” in the brain, whether we hear a spoken word or lip-read the same word by watching a speaker’s face? After more than four years of work (I tested the first participant in August 2016), the study has now found a great home in the journal eLife.

We asked our participants to do the same task in two conditions: auditory and visual. In the auditory condition, they heard a speaker say a sentence. In the visual condition, they only saw the speaker say the sentence, without sound (lip reading). In both conditions, they then chose, from a list of four words, the one they had understood in the sentence.

In the auditory condition, the speech was embedded in noise so that participants would misunderstand words in some cases (on average, they understood the correct word in 70% of trials).

In the visual condition, performance was also 70% correct on average. But lip-reading skills vary enormously across the population, and we saw this in our data too: individual performance in the lip-reading task covered the whole possible range, from chance level (25%, given four response options) to almost 100% correct. This is despite the fact that all of our participants were proficient speakers (mostly college students). It was suggested quite some time ago that variability in lip reading reflects something other than normal speech perception abilities. Could it therefore be that auditory and visual words are processed completely differently in the brain?

To answer this question, we recorded our participants’ brain activity while they did the comprehension task. We used magnetoencephalography (MEG), which detects changes in the magnetic fields outside the head that are produced by neural activity.

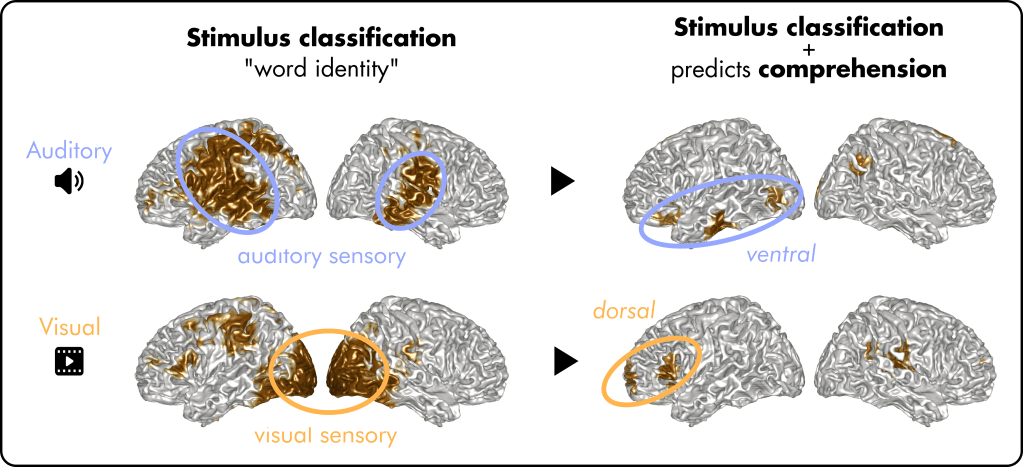

To analyse brain activity during the perception of auditory and visual words, we used a classification approach. First, we tried to reconstruct which word participants had perceived by comparing the patterns of their brain activity (stimulus classification, or decoding). Second, we analysed which of these classification patterns predicted whether participants actually perceived the correct word.
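To make these two steps more concrete, here is a minimal Python sketch on simulated data. It assumes a leave-one-out, correlation-based nearest-centroid classifier, which is one common decoding choice and not necessarily the exact classifier or MEG pipeline used in the study. It summarises the link to behaviour as a point-biserial correlation between per-trial decoding evidence and whether the participant chose the correct word. The function names `loo_correlation_decoder` and `evidence_vs_comprehension`, the simulated "signal strength", and all parameters are hypothetical.

```python
import numpy as np


def loo_correlation_decoder(X, y):
    """Hypothetical leave-one-out nearest-centroid decoder using Pearson correlation.

    X: (n_trials, n_features) array, e.g. MEG activity flattened over sensors x time.
    y: (n_trials,) integer word labels; each word needs at least two trials.
    Returns the predicted label per trial and a per-trial 'evidence' score:
    correlation with the true word's centroid minus the mean correlation
    with the other words' centroids.
    """
    X = np.asarray(X, dtype=float)
    y = np.asarray(y)
    classes = np.unique(y)
    preds = np.empty_like(y)
    evidence = np.empty(len(y))
    for i in range(len(y)):
        train = np.arange(len(y)) != i  # hold out trial i
        # Average the remaining trials of each word into a template (centroid).
        centroids = np.stack([X[train & (y == c)].mean(axis=0) for c in classes])
        r = np.array([np.corrcoef(X[i], m)[0, 1] for m in centroids])
        preds[i] = classes[np.argmax(r)]  # best-matching word
        true_idx = np.flatnonzero(classes == y[i])[0]
        evidence[i] = r[true_idx] - np.delete(r, true_idx).mean()
    return preds, evidence


def evidence_vs_comprehension(evidence, correct):
    """Point-biserial correlation between decoding evidence and behaviour.

    correct: (n_trials,) booleans, True if the participant chose the right word.
    Positive values mean that trials with stronger word-specific patterns
    were more often perceived correctly.
    """
    return np.corrcoef(np.asarray(evidence, float), np.asarray(correct, float))[0, 1]


# Simulated data for one hypothetical participant and brain region.
rng = np.random.default_rng(0)
n_words, n_reps, n_features = 4, 30, 50
templates = rng.normal(size=(n_words, n_features))  # one pattern per word
y = np.repeat(np.arange(n_words), n_reps)
strength = rng.uniform(0.0, 1.0, size=y.size)  # trial-wise signal strength
X = strength[:, None] * templates[y] + rng.normal(size=(y.size, n_features))
# Assumption: stronger trials are more likely to be understood (25% = chance).
correct = rng.uniform(size=y.size) < 0.25 + 0.75 * strength

preds, evidence = loo_correlation_decoder(X, y)
accuracy = (preds == y).mean()
r = evidence_vs_comprehension(evidence, correct)
print(f"decoding accuracy: {accuracy:.2f} (chance = {1 / n_words:.2f})")
print(f"evidence-behaviour correlation: {r:.2f}")
```

In real data, `X` would typically hold one participant's activity for a single brain region and time window. The two measures would then be computed region by region and tested across participants. This is how an area can decode words well (high accuracy) and yet show little relation to what was perceived (low correlation), or the reverse.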

In a nutshell, two main findings emerged:

1. Areas that encode word identity very well (e.g. sensory areas) often do not predict comprehension. Conversely, areas that encode words less precisely (e.g. higher-order language areas) influence what we actually perceive. This holds for both auditory and visual speech.

Following a point once made by Hickok & Poeppel, we think the task we perform is key: it determines the results we get and which areas are most relevant for our behaviour. In our case, higher-order language areas are most important for comprehension. But if the task were to discriminate speech sounds or lip movements, early sensory areas would probably be more task-relevant.

2. The representations of auditory and visual word identities are largely distinct. They converge in only a couple of areas, located in the left frontal cortex and the right angular gyrus (green circles below). These areas might therefore hold some kind of amodal representation of a word's perceived meaning.

Previous studies have often measured activation across the brain using fMRI (functional magnetic resonance imaging). Activation means that something is “happening” in a brain area, which increases its oxygen demand. These studies usually suggest that auditory and visual speech processing overlap to a large extent.

But the nature of these activations can be unclear. We think that the activation of a general language network could explain such findings, without that network necessarily representing specific word identities. Moreover, other studies often use categories (for example, buildings vs. animals) instead of individual word meanings, which could give a different picture.

Overall, our analysis of specific word identities (meanings?) shows that the brain does very different things when we listen to someone speak and when we try to lip-read. This could explain why our ability to understand acoustic speech is usually unrelated to our ability to lip-read.

Please note that this is an updated version of an earlier blog post on the preprint of the same study. Data for this study can be found here.